PathMaker Group has been working in the Identity and Access Management space since 2003. We take pride in delivering quality IAM solutions with the best vendor products available. As the vendor landscape changed with mergers and acquisitions, we specialized in the products and vendors that led the market with key capabilities, enterprise scale, reliable customer support and strong partner programs. As the market evolves to address new business problems, regulatory requirements, and emerging technologies, PathMaker Group has continued to expand our vendor relationships to meet these changes. For many customers, the requirements for traditional on-premise IAM haven’t changed. We will continue supporting these needs with products from IBM and Oracle. To meet many of the new challenges, we have added new vendor solutions that we believe lead the IAM space in meeting specific requirements. Here are some highlights:

IoT/Consumer Scalability

UnboundID offers a next-generation IAM platform that can be used across multiple large-scale identity scenarios such as retail, Internet of Things or public sector. The UnboundID Data Store delivers unprecedented web scale data storage capabilities to handle billions of identities along with the security, application and device data associated with each profile. The UnboundID Data Broker is designed to manage real-time policy-based decisions according to profile data. The UnboundID Data Sync uses high throughput and low latency to provide real-time data synchronization across organizations, disparate data systems or even on-premise and cloud components. Finally, the UnboundID Analytics Engine gives you the information you need to optimize performance, improve services and meet auditing and SLA requirements.

Identity and Data Governance

SailPoint provides industry-leading IAM governance capabilities for both on-premise and cloud-based scenarios. IdentityIQ is SailPoint’s on-premise governance-based identity and access management solution that delivers a unified approach to compliance, password management and provisioning activities. IdentityNow is a full-featured cloud-based IAM solution that delivers single sign-on, password management, provisioning, and access certification services for cloud, mobile, and on-premise applications. SecurityIQ is SailPoint’s newest offering. It provides governance for unstructured data and assists with data discovery and classification, permission management, and real-time policy monitoring and notifications.

Cloud/SaaS SSO, Privileged Access and EMM

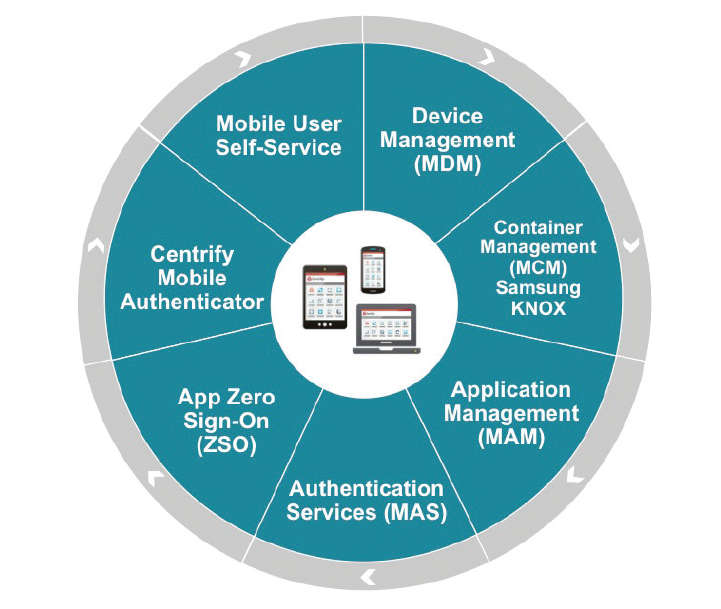

Finally, Centrify provides advanced privileged access management, enterprise mobility management, and cloud-based access control for customers across industries and around the world. The Centrify Identity Service is a Software as a Service (SaaS) product that includes single sign-on, multi-factor authentication, and enterprise mobility management, as well as seamless application integration. The Centrify Privilege Service provides simple cloud-based control of all of your privileged accounts while providing extremely detailed session monitoring, logging and reporting capabilities. The Centrify Server Suite lets you leverage Active Directory as the source of privilege and access management across your Unix, Linux and Windows server infrastructure.

With the addition of these three vendors, PathMaker Group can help address key gaps in a customer’s IAM capability. To better understand the eight levers of IAM Maturity and where you may have gaps, take a look at this blog post by our CEO, Keith Squires, about the IAM MAP. Please reach out to see how PathMaker Group, using industry-leading products and our tried-and-true delivery methodology, can help get your company started on the journey to IAM maturity.

Over the past 12-18 months, there has been mounting interest in the next generation of IAM systems. Decentralized and self-sovereign identity promise a frictionless user experience and improved privacy controls, and they appeal to organizations looking to reduce both costs and risks. How do you get started? Many organizations are just starting their journey to the cloud, so the idea of a decentralized identity may seem too futuristic.

Mobile has become the de facto way to access cloud apps, requiring you to ensure the security and enable the functionality of users’ devices. This includes deploying appropriate client apps to the right device and ensuring an appropriately streamlined mobile experience. Unfortunately, most existing Identity and Access Management as a Service (IDaaS) solutions fall short when